A set of constructs and methods introduced and described in the book Netlab Loligo will improve the ability of systems constructed with them to adapt to current short-term situations, and to learn from those short-term experiences over the long term.

How do we, as biological organisms, manage to keep so much finely detailed information in our brains about how to respond to any given situation? That is, how do we manage to keep countless tiny intricacies stored away in our “subconscious” ready to be called upon at just the right time, right when we need them in the present moment?

According to this theory of learning, the answer to that question is: We don't.

Instead, our long-term connections (those that immediately drive our responses at all times) are only concerned with getting us started in any given “present.” Responses stored in long-term connections start us along a trajectory that makes it easier for us to learn whatever short-term, detailed responses a given situation requires.

Connections that drive short-term responses, on the other hand, form spontaneously in the moment and quickly adapt to whatever situation we currently find ourselves in. Just as significantly, they tend to dissipate as quickly as they form. In essence, this theory says that each connection in the brain that drives responses (physical or internal) includes multiple distinct connection strengths, each of which increases and decreases at a different rate.
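The idea of several strengths changing at different rates can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Strength` class, its update rule, and the rate values are hypothetical and are not taken from the book. One strength grows and fades quickly; the other grows slowly and retains what it gains.

```python
from dataclasses import dataclass


@dataclass
class Strength:
    """One connection strength with its own growth and decay rates.

    Hypothetical illustration; the update rule below is an assumption.
    """
    learn_rate: float   # fraction of the remaining gap to 1.0 closed per active step
    forget_rate: float  # fraction of the current value lost per idle step
    value: float = 0.0

    def step(self, active: bool) -> None:
        if active:
            self.value += self.learn_rate * (1.0 - self.value)
        else:
            self.value -= self.forget_rate * self.value


# A fast strength (short-term) and a slow strength (long-term) on one connection.
fast = Strength(learn_rate=0.5, forget_rate=0.5)
slow = Strength(learn_rate=0.01, forget_rate=0.001)

history = []
for t in range(10):
    active = t < 5          # the situation lasts 5 steps, then ends
    fast.step(active)
    slow.step(active)
    history.append((fast.value, slow.value))

for t, (f, s) in enumerate(history):
    print(f"step {t}: fast={f:.4f} slow={s:.4f}")
```

In this sketch the fast strength dominates while the situation lasts, then fades almost completely, while the slow strength keeps most of the small amount it acquired.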

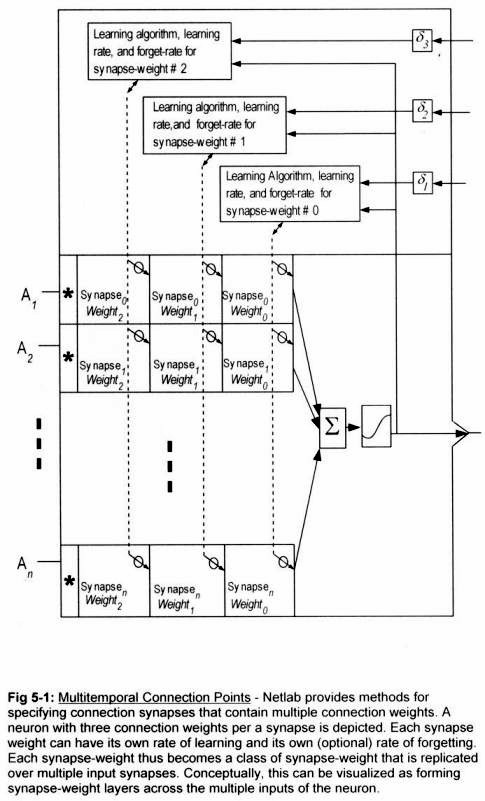

Multi-temporality is achieved in Netlab's simulation environment by providing multiple weights per connection point (i.e., synapse). These are referred to as Multitemporal [Note 1] synapses.

Multitemporal synapses employ multiple weights. Each of the multiple weights associated with a given synapse represents a connection strength, and can be set to acquire and retain its strength at a different rate from the others. The methods also specify Weight-To-Weight Learning, which is a means of teaching a given weight in the set of multiple weights, using the values of other weights from the same connection. Together these constructs provide all the functionality required to model the theory of learning discussed above.
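As a rough sketch of how these two constructs might fit together, the Python below models a synapse holding several weights, each with its own learning rate and forget rate, plus a weight-to-weight step. Everything here is an assumption made for illustration: the names `Weight`, `MultitemporalSynapse`, and `w2w_rate`, the additive output, the error-driven update, and the choice to teach each slower weight from the next-faster one are not Netlab's actual API or the book's algorithms.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Weight:
    learn_rate: float   # how quickly this weight acquires strength
    forget_rate: float  # how quickly this weight loses strength
    value: float = 0.0


@dataclass
class MultitemporalSynapse:
    """Hypothetical synapse with several weights, ordered fastest to slowest."""
    weights: List[Weight]
    w2w_rate: float = 0.05  # assumed rate for weight-to-weight learning

    def output(self, x: float) -> float:
        # Assumption: the weights' contributions simply add together.
        return x * sum(w.value for w in self.weights)

    def learn(self, x: float, error: float) -> None:
        # Assumption: each weight follows a simple error-driven update
        # at its own learning rate, then decays at its own forget rate.
        for w in self.weights:
            w.value += w.learn_rate * error * x
            w.value -= w.forget_rate * w.value

    def weight_to_weight(self) -> None:
        # Assumption: each slower weight is nudged toward the value the
        # next-faster weight held at the start of this call.
        snapshot = [w.value for w in self.weights]
        for i in range(1, len(self.weights)):
            gap = snapshot[i - 1] - snapshot[i]
            self.weights[i].value += self.w2w_rate * gap


# Three weights per connection point: fast, medium, slow.
syn = MultitemporalSynapse(
    [Weight(0.5, 0.2), Weight(0.05, 0.01), Weight(0.005, 0.0)]
)
for _ in range(20):
    error = 1.0 - syn.output(1.0)   # drive the output toward a target of 1.0
    syn.learn(1.0, error)
    syn.weight_to_weight()
print([round(w.value, 3) for w in syn.weights])
```

The design choice worth noting is that the slower weights never need to see the error signal strongly themselves; in this sketch they pick up lasting structure mainly from what the faster weights have already learned.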

Following is a graphic excerpted from the book Netlab Loligo, which shows a neuron containing three different weights for each connection point. Each weight is given its own learning algorithm, with its own learning rate and forget rate.

Stand Out Publishing
